Vector Database Comparison 2026: Pinecone vs Weaviate vs Qdrant vs Milvus vs pgvector


Vector databases are the RAG-era storage layer - purpose-built for embedding similarity search at scale. Where traditional databases index integers, strings, and structured records, vector databases index dense high-dimensional vectors (typically 384, 768, 1024, or 1536 dimensions) and retrieve nearest neighbours via approximate-nearest-neighbour (ANN) algorithms like HNSW, IVF, or ScaNN.

This guide compares the 8 dominant vector databases in 2026 - Pinecone, Weaviate, Qdrant, Milvus, pgvector, Chroma, LanceDB, Vespa - on RAG fit, hybrid search, scale, pricing, and data residency for UAE AI deployments under CBUAE AI Guidance, PDPL, and DESC ISR v3.

What Vector Databases Actually Do

RAG (Retrieval-Augmented Generation) workflow:

- Chunk - split source documents into 200-1000 token chunks

- Embed - encode each chunk via embedding model (OpenAI text-embedding-3, Cohere embed-english, local bge-large, etc.) producing a dense vector

- Index - store vectors in a vector database with metadata (source URL, timestamp, author, etc.)

- Query - encode user question via same embedding model

- Retrieve - find top-K vectors nearest to query vector via ANN search

- Ground - feed retrieved chunks as context to LLM alongside user question

- Generate - LLM answers with retrieved context, ideally citing sources

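Vector databases handle the third through fifth steps above (Index, Query, Retrieve) at production scale: the embedding model still encodes the query, and the database stores the vectors and performs the nearest-neighbour lookup. The quality of this layer directly determines RAG output quality - if retrieval is bad, no downstream LLM can recover.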
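The chunk, embed, index, and retrieve steps can be sketched in a few lines of plain Python. This is only an illustration under loud assumptions: `embed` is a toy hashing function standing in for a real embedding model, `ToyIndex` does exact brute-force search rather than ANN, and all names are hypothetical.

```python
import hashlib
import math
import re

DIM = 256  # toy dimensionality; real embedding models use 384-1536 or more


def chunk(text: str, max_words: int = 40) -> list[str]:
    """Split text into fixed-size word windows (a stand-in for token-based chunking)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> list[float]:
    """Toy embedding (assumption): hash each word into a bucket, then L2-normalise.
    It only captures word overlap; a real model captures meaning."""
    vec = [0.0] * DIM
    for word in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(word.encode()).hexdigest(), 16) % DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


class ToyIndex:
    """Exact nearest-neighbour search over an in-memory list; no ANN structure."""

    def __init__(self) -> None:
        self.rows: list[tuple[list[float], str, dict]] = []

    def add(self, text: str, metadata: dict) -> None:
        self.rows.append((embed(text), text, metadata))

    def search(self, query: str, k: int = 3) -> list[tuple[float, str, dict]]:
        q = embed(query)
        # Vectors are unit length, so the dot product equals cosine similarity.
        scored = [(sum(a * b for a, b in zip(q, vec)), text, meta)
                  for vec, text, meta in self.rows]
        scored.sort(key=lambda row: row[0], reverse=True)
        return scored[:k]


index = ToyIndex()
index.add("Qdrant is written in Rust and supports payload filtering", {"source": "qdrant.md"})
index.add("Postgres full-text search uses tsvector columns", {"source": "postgres.md"})
index.add("Milvus targets billion-scale distributed deployments", {"source": "milvus.md"})
hits = index.search("tsvector full-text search in Postgres", k=2)
```

A production system replaces `embed` with the real model and `ToyIndex` with one of the databases below; the shape of the calls (add with metadata, search with top-K) stays broadly the same.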

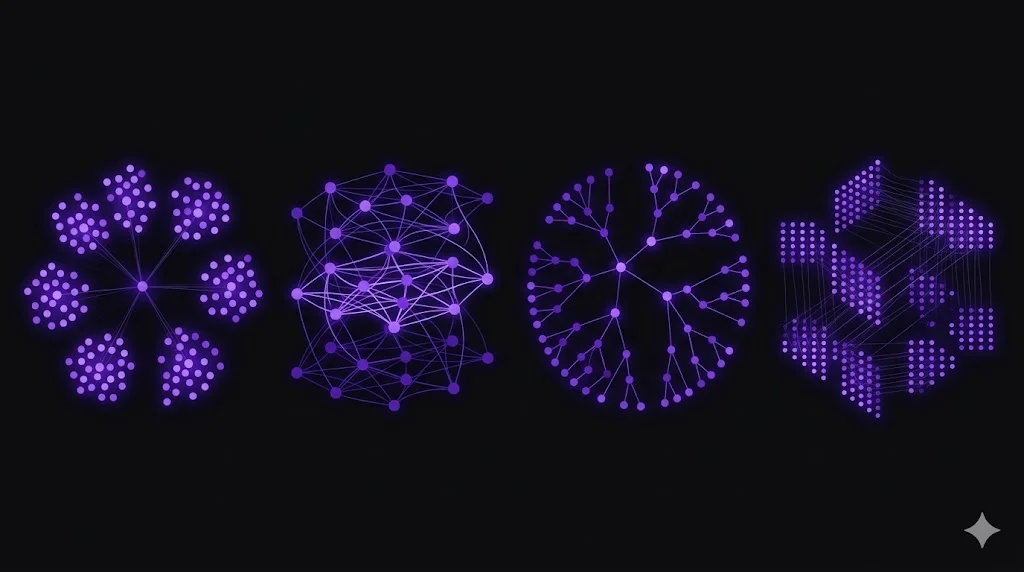

The 8 Vector Databases

Pinecone - The Managed SaaS Leader

Pinecone pioneered the commercial managed vector database category. In 2026 it remains the mindshare leader for production RAG.

Strengths:

- Fully managed - zero operational overhead; deploy a RAG feature without standing up infrastructure

- Strong RAG ecosystem - deepest integrations with LangChain, LlamaIndex, OpenAI, Anthropic

- Serverless architecture - pay per use; scale to zero

- Hybrid search (added 2024, maturing through 2026)

- Enterprise compliance - SOC 2, ISO 27001, HIPAA, GDPR

Trade-offs:

- SaaS-only - no self-host; data residency requires SaaS region selection

- Pricing escalates at scale

- Less filter depth than Qdrant or Weaviate historically

Fit: startups and enterprises wanting managed simplicity. Default choice if data residency isn’t a primary concern.

Weaviate - The Open-Source Veteran

Weaviate (2019 open-source project, commercial Weaviate Cloud) is one of the longest-standing production vector databases.

Strengths:

- Open source - BSD 3-Clause; full self-host control

- Hybrid search - BM25 + vector native, among the best in the category

- Modules system - built-in text2vec modules for OpenAI, Cohere, HuggingFace, local models

- GraphQL API plus REST - expressive query language

- Multi-tenancy - strong tenant isolation for SaaS products built on Weaviate

Trade-offs:

- Operational complexity higher than Pinecone managed

- Go-based engine with in-memory HNSW indexes - memory footprint considerations at scale

Fit: teams wanting open-source + hybrid search; multi-tenant SaaS products; UAE enterprises with data residency requirements.

Qdrant - The Performance-Focused Rising Star

Qdrant (Rust-based, 2021 open source, commercial Qdrant Cloud) has gained significant 2024-2026 adoption as the performance-focused alternative.

Strengths:

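- Excellent performance - Rust engine, HNSW with scalar quantization, among the fastest in published benchmarks

- Strong filtering - payload filters are applied during HNSW traversal (filterable HNSW), so filtered queries avoid most of the usual pre- or post-filtering penalty

- Hybrid search - the Query API (2024) fuses dense and sparse vector results, for example via Reciprocal Rank Fusion; BM42, the sparse scoring approach Qdrant announced in 2024, was released as experimental rather than production-ready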

- Sparse vectors - native support for sparse embeddings (useful for lexical search)

- Quantization - scalar, binary, product quantization for memory reduction at scale

- Open source - Apache 2.0

- Qdrant Cloud managed SaaS with competitive pricing vs Pinecone
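The scalar quantization mentioned in the list above trades a small amount of precision for memory. The sketch below shows the general idea only, assuming a simple per-vector min/max range; it is not Qdrant's implementation, and the function names are hypothetical.

```python
def quantize_uint8(vector: list[float]) -> tuple[bytes, float, float]:
    """Map each float to an integer code in [0, 255] using the vector's min/max range.
    Stored as one byte per dimension instead of four bytes for float32."""
    lo, hi = min(vector), max(vector)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 when all values are equal
    codes = bytes(round((v - lo) / scale) for v in vector)
    return codes, lo, scale


def dequantize(codes: bytes, lo: float, scale: float) -> list[float]:
    """Recover approximate floats; each value is off by at most about scale / 2."""
    return [lo + c * scale for c in codes]


original = [0.12, -0.5, 0.33, 0.9, -0.07]
codes, lo, scale = quantize_uint8(original)
restored = dequantize(codes, lo, scale)
max_error = max(abs(a - b) for a, b in zip(original, restored))
```

One byte per dimension instead of four is where the roughly 4x memory reduction for scalar quantization comes from; binary quantization goes further, to one bit per dimension, at a larger accuracy cost.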

Trade-offs:

- Newer than Weaviate (smaller ecosystem, though growing fast)

- Less feature-rich on GraphQL-style queries than Weaviate

Fit: performance-sensitive production RAG; teams wanting open-source + competitive-performance alternative to Pinecone; UAE enterprises with residency needs.

Milvus - The Petabyte-Scale Option

Milvus (LF AI & Data Foundation project, open source since 2019, commercial Zilliz Cloud) is the petabyte-scale distributed vector database.

Strengths:

- Massive scale - designed for billions of vectors with distributed architecture

- Multiple index types - HNSW, IVF variants, DiskANN, and others (ANNOY was offered in older releases) - pick the right algorithm for the dataset

- Multi-vector / multi-modal support

- Kubernetes-native - Helm-based deployment

- Zilliz Cloud managed SaaS

Trade-offs:

- Operational complexity higher than simpler alternatives

- Overkill for smaller datasets

- Steeper learning curve

Fit: extremely large datasets (100M+ vectors); multi-modal search; organizations with strong Kubernetes operational capability.

pgvector - The “Just Use Postgres” Answer

pgvector is the Postgres extension adding vector similarity search. In 2024-2026 it matured significantly with HNSW index and the pgvectorscale extension from Timescale.

Strengths:

- Uses existing Postgres - no new storage system to operate

- Transactional consistency with application data in the same database

- Familiar tooling - pg_dump, pgbackrest, standard Postgres operations

- HNSW index (added 2023, matured through 2026) for fast ANN queries

- pgvectorscale (Timescale) adds a StreamingDiskANN index and statistical binary quantization for larger workloads

- Hybrid search via Postgres full-text (tsvector) + pgvector
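The HNSW index and the tsvector-based hybrid pattern from the list above might look like the following. This is a sketch, not a reference schema: the `chunks` table, its column names, and the 3-dimensional vector are hypothetical, and actually running the SQL requires a Postgres instance with pgvector installed and a driver such as psycopg, which is not shown.

```python
def to_vector_literal(values) -> str:
    """Format a Python sequence as a pgvector text literal, e.g. '[0.1,0.2,0.3]'."""
    return "[" + ",".join(repr(float(v)) for v in values) + "]"


# Schema: one row per chunk, with a generated tsvector column for lexical search.
SETUP_SQL = """
CREATE EXTENSION IF NOT EXISTS vector;
CREATE TABLE IF NOT EXISTS chunks (
    id        bigserial PRIMARY KEY,
    body      text NOT NULL,
    body_tsv  tsvector GENERATED ALWAYS AS (to_tsvector('english', body)) STORED,
    embedding vector(3)  -- toy dimension; match your embedding model
);
CREATE INDEX IF NOT EXISTS chunks_embedding_hnsw
    ON chunks USING hnsw (embedding vector_cosine_ops);
CREATE INDEX IF NOT EXISTS chunks_body_tsv_gin
    ON chunks USING gin (body_tsv);
"""

# Semantic side: <=> is pgvector's cosine distance operator (smaller is closer).
VECTOR_QUERY = """
SELECT id, body, embedding <=> %(q)s::vector AS cosine_distance
FROM chunks
ORDER BY embedding <=> %(q)s::vector
LIMIT %(k)s;
"""

# Lexical side: standard Postgres full-text search over the generated column.
LEXICAL_QUERY = """
SELECT id, body, ts_rank(body_tsv, plainto_tsquery('english', %(text)s)) AS rank
FROM chunks
WHERE body_tsv @@ plainto_tsquery('english', %(text)s)
ORDER BY rank DESC
LIMIT %(k)s;
"""

params = {"q": to_vector_literal([0.1, 0.2, 0.3]), "text": "container optimization", "k": 5}
```

The two result lists are then merged in application code or in a wrapping SQL query, which is the "more manual setup" that pgvector hybrid search involves compared with databases that fuse results natively.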

Trade-offs:

- Scale limits vs dedicated vector databases (typically ~10-50M vectors efficiently; more with partitioning)

- Fewer specialized features (no native multi-vector models, no built-in embedding generation)

Fit: teams already on Postgres; simpler architectures; UAE enterprises wanting to minimize infrastructure count. Strong default first choice for new RAG projects under 10M vectors.

Chroma - The Developer-First Lightweight

Chroma is a developer-first vector database optimized for quick RAG prototyping.

Strengths:

- Easy to start - pip install, in-process or client-server

- Python-first ergonomics

- Great for prototyping - zero-config defaults

- Open source - Apache 2.0

Trade-offs:

- Not optimized for scale - production deployments typically migrate to Qdrant / Weaviate / Pinecone

- Smaller ecosystem than production-focused alternatives

Fit: early-stage RAG prototypes; developer notebooks; proof-of-concept work. Expect to migrate to a production-scale alternative before launch.

LanceDB - The Embedded Modern Alternative

LanceDB is a newer (2022) vector database with a modern architecture using the Lance columnar format.

Strengths:

- Serverless / embedded - runs in-process or as remote service

- Columnar format (Lance) - strong analytical query performance

- Multi-modal support - images, video, audio alongside text

- Strong AWS S3 / cloud storage integration - vectors stored as cloud files

- Rust engine with Python, TypeScript, Java SDKs

Trade-offs:

- Newer than alternatives; smaller community

- Fewer enterprise deployments to reference

Fit: teams wanting modern columnar architecture; multi-modal use cases; S3-backed vector storage patterns.

Vespa - The Yahoo Search Heritage

Vespa (Yahoo / Verizon Media spin-out) predates the vector database category - it’s a search engine that added vector search. It offers the strongest multi-vector, multi-modal, and hybrid search capabilities in this comparison.

Strengths:

- Best-in-class hybrid search - ranking models, BM25, vector, and learned-ranking combined

- Enterprise scale - Yahoo-scale search heritage

- Multi-vector per document - richer representation than single-vector alternatives

- Structured + unstructured query combination

Trade-offs:

- Steep learning curve - ranking profile configuration is complex

- Operational complexity

- Overkill for simple RAG

Fit: enterprise search scenarios combining complex relevance tuning with vector similarity; organizations with search engineering expertise.

Comparison Matrix

| Vector DB | Type | Open Source | Hybrid Search | Scale Ceiling | UAE Residency (Self-Host) | Best For |
|---|---|---|---|---|---|---|
| Pinecone | SaaS | No | Yes (2024+) | Large | Via region attestation | Managed simplicity |
| Weaviate | Both | Yes | Excellent | Large | Yes | OSS + hybrid + multi-tenant |
| Qdrant | Both | Yes | Strong (dense + sparse fusion) | Large | Yes | Performance + OSS |
| Milvus | Both | Yes (LF AI & Data) | Yes | Petabyte | Yes | Extremely large scale |
| pgvector | Extension | Yes | Via Postgres FTS | Moderate | Yes | Existing Postgres shops |
| Chroma | Both | Yes | Basic | Small-Moderate | Yes | Prototypes |
| LanceDB | Both | Yes | Yes | Large | Yes | Columnar / multi-modal |
| Vespa | Both | Yes | Best-in-class | Petabyte | Yes | Complex enterprise search |

Choosing by Data Scale

Vector count is the most important selection criterion:

- Under 1M vectors: Chroma, pgvector, or any managed service works. Additional infrastructure complexity is not justified at this size.

- 1M-10M vectors: pgvector with HNSW remains viable; Qdrant, Weaviate, Pinecone all excellent.

- 10M-100M vectors: Qdrant, Weaviate, Pinecone, or Milvus. pgvector with pgvectorscale can work but specialized DBs shine.

- 100M+ vectors: Milvus, Vespa, Pinecone Enterprise. Distributed architecture required.

- Billion+ vectors: Milvus or Vespa with dedicated engineering team. Custom sharding strategies.

For 2026 new RAG projects, most teams can stay under 10M vectors by choosing chunk sizes deliberately (smaller chunks mean more vectors) and indexing only content that needs to be retrievable. Scale up only when retrieval quality demonstrably requires more granularity.
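A quick way to sanity-check these tiers is to estimate raw vector storage as vectors x dimensions x bytes per value. The helper below is a back-of-the-envelope sketch with a hypothetical name; it deliberately ignores index overhead (for example HNSW graph links), metadata, and replication, all of which add to the real footprint.

```python
def raw_vector_bytes(num_vectors: int, dims: int, bytes_per_value: int = 4) -> int:
    """Raw storage for the vectors alone: float32 uses 4 bytes per value,
    int8 scalar quantization uses 1."""
    return num_vectors * dims * bytes_per_value


# 10M vectors at 1536 dimensions, float32 vs 1-byte quantized codes.
float32_gb = raw_vector_bytes(10_000_000, 1536) / 1e9        # 61.44 GB
quantized_gb = raw_vector_bytes(10_000_000, 1536, 1) / 1e9   # 15.36 GB

# The same corpus at 384 dimensions is four times smaller again.
small_model_gb = raw_vector_bytes(10_000_000, 384) / 1e9     # 15.36 GB
```

At 10M vectors the raw data still fits on a single well-provisioned node, which is consistent with pgvector remaining viable at that tier; at 100M+ the same arithmetic lands in the hundreds of gigabytes before index overhead.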

Hybrid Search: Table Stakes in 2026

Pure vector search misses exact-match content. A user searching “Kubernetes 1.32 changelog” wants the exact changelog page, not a semantically-similar Kubernetes 1.30 article. Pure lexical search misses paraphrased concepts. A user searching “how to make containers faster” wants content about “container optimization” even without that exact phrase.

Hybrid search combines both:

- Vector search captures semantic intent

- Lexical (BM25 / full-text) captures exact matches

- Reciprocal Rank Fusion (RRF) or weighted scoring combines them
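Reciprocal Rank Fusion needs only the rank positions from each result list, not the raw scores, which is why it is a common way to merge vector and lexical results. A minimal sketch follows; the document IDs are made up for illustration, and `k=60` is the constant commonly used with RRF rather than a value this guide prescribes.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs.
    Each document scores sum(1 / (k + rank)) over the lists it appears in."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for a deterministic order.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for the query "Kubernetes 1.32 changelog".
vector_hits = ["k8s-130-article", "k8s-132-changelog", "container-tuning"]
lexical_hits = ["k8s-132-changelog", "k8s-release-notes"]

fused = reciprocal_rank_fusion([vector_hits, lexical_hits])
# fused[0] is "k8s-132-changelog": it is the only document present in both lists.
```

Weighted scoring is the alternative when the two score scales are comparable or have been normalised; RRF avoids that normalisation step at the cost of discarding score magnitudes.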

For 2026 production RAG, hybrid search is not optional. Evaluate vector databases explicitly on hybrid search quality:

- Weaviate - mature hybrid, BM25 first-class

- Qdrant - dense + sparse vector fusion via the Query API, strong 2024+ support; BM42 itself was released as experimental

- Vespa - best-in-class hybrid ranking

- Pinecone - hybrid added 2024, improving

- pgvector - via Postgres full-text (tsvector); works but more manual setup

- Milvus - sparse + dense vector hybrid

- Chroma - basic hybrid; less mature

- LanceDB - vector + scalar filtering; hybrid via custom ranking

Integration with RAG Frameworks

Vector database choice affects RAG framework compatibility:

- LangChain: supports every vector DB in this guide

- LlamaIndex: supports every vector DB in this guide

- Haystack: supports most

- Cognita (TrueFoundry): supports most

- Native SDKs: each vector DB has Python / TypeScript / Java SDKs for direct integration

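For greenfield RAG projects, pick the vector DB first, then pick the framework - LangChain and LlamaIndex support every vector DB in this guide, and Haystack and Cognita cover most, so confirm the specific integration exists before committing to either of those two.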

UAE Data Residency: The Critical Decision

For CBUAE Article 13 customer data, NESA CII, DESC ISR v3 government data, and PDPL personal data, vector database data residency is not optional.

Self-hosted options (Qdrant, Weaviate, Milvus, pgvector, Chroma, LanceDB, Vespa) provide full residency control when deployed in UAE-resident infrastructure:

- AWS me-central-1 (Middle East (UAE) region)

- Azure UAE North (Dubai) / UAE Central (Abu Dhabi)

- Oracle Cloud UAE

- Core42 sovereign cloud

- Stargate UAE

SaaS options (Pinecone, Weaviate Cloud, Qdrant Cloud, Zilliz Cloud, etc.) need explicit UAE / EU region attestation:

- Most have EU regions (typically Frankfurt or Ireland)

- UAE regions are less common in 2026

- Verify specific customer data class residency before procurement

For strictest residency (CBUAE Article 13 customer data in banks), self-hosted on UAE infrastructure is the cleanest path. The operational investment is significant but compliance evidence is unambiguous.
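Part of the residency evidence can be automated as a simple check that every component of the deployment sits in an approved region. The sketch below assumes a hypothetical inventory format and an allow-list built from the cloud provider region identifiers for the UAE; the list itself is an assumption to be agreed with your compliance team, and the check complements rather than replaces audit documentation.

```python
# Assumed allow-list of UAE region identifiers per provider (adjust to your policy).
UAE_REGIONS = {
    "aws": {"me-central-1"},
    "azure": {"uaenorth", "uaecentral"},
    "oci": {"me-dubai-1", "me-abudhabi-1"},
}


def non_resident_components(deployment: dict[str, tuple[str, str]]) -> list[str]:
    """Return the names of components whose (provider, region) is not on the allow-list."""
    return [
        name
        for name, (provider, region) in deployment.items()
        if region not in UAE_REGIONS.get(provider, set())
    ]


# Hypothetical inventory: the vector DB and Postgres are in the UAE, the backups are not.
deployment = {
    "qdrant-cluster": ("aws", "me-central-1"),
    "vector-backups": ("aws", "eu-west-1"),
    "pgvector-db": ("azure", "uaenorth"),
}

violations = non_resident_components(deployment)
```

Backups, snapshots, and embedding API calls are the components most often missed: a vector database in a UAE region does not help if its backups or the text sent for embedding leave the country.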

Recommended Stacks by Use Case

Early-stage AI startup (prototyping)

- Chroma or pgvector for first RAG implementation

- OpenAI embeddings (text-embedding-3-large)

- LangChain or LlamaIndex

- Annual cost: minimal

Mid-size AI product (production RAG, non-regulated)

- Pinecone for managed simplicity (USD 500-5,000/month)

- Or Qdrant Cloud for competitive alternative

- Hybrid search enabled

- Annual cost: USD 6-60k

UAE regulated enterprise (banks, fintechs, government)

- Self-hosted Qdrant or Weaviate on AWS me-central-1 / Azure UAE North / Core42

- Or pgvector on Azure Database for PostgreSQL UAE North if Postgres-native

- Hybrid search enabled

- Encryption at rest with customer-managed KMS keys

- Access controls integrated with Entra ID / IAM

- Backup to UAE-resident S3 / Blob

- Documented residency evidence for CBUAE / NESA / DESC audit

Massive-scale enterprise (100M+ vectors)

- Milvus or Vespa for distributed architecture

- Kubernetes-based deployment

- Strong observability integration (metrics + traces)

- Quantization to manage memory footprint

Evaluation: How to Test a Vector DB for Your Use Case

Test vector databases on your actual data, not vendor benchmarks:

- Embed your corpus with the embedding model you’ll use in production

- Create a golden query set - 100-500 real user queries with expected relevant documents

- Load into each candidate vector DB

- Measure retrieval quality - Precision@K, Recall@K, MRR for your golden set

- Measure performance - P95/P99 query latency at production-realistic QPS

- Measure operational burden - setup time, monitoring, backup, scaling
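The retrieval-quality step above can be scripted once and reused for every candidate database. The following sketch assumes a golden set of `(query, relevant_ids)` pairs and a `search` callable that wraps whichever database is under test; both names are hypothetical.

```python
from typing import Callable


def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k results that are relevant."""
    return sum(1 for doc in retrieved[:k] if doc in relevant) / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top-k results."""
    if not relevant:
        return 0.0
    return sum(1 for doc in retrieved[:k] if doc in relevant) / len(relevant)


def reciprocal_rank(retrieved: list[str], relevant: set[str]) -> float:
    """1 / rank of the first relevant result, or 0.0 if none was retrieved."""
    for rank, doc in enumerate(retrieved, start=1):
        if doc in relevant:
            return 1.0 / rank
    return 0.0


def evaluate(golden: list[tuple[str, set[str]]],
             search: Callable[[str, int], list[str]],
             k: int = 5) -> dict[str, float]:
    """Average Precision@K, Recall@K, and MRR over the golden query set."""
    n = len(golden)
    results = [(search(query, k), relevant) for query, relevant in golden]
    return {
        "precision_at_k": sum(precision_at_k(r, rel, k) for r, rel in results) / n,
        "recall_at_k": sum(recall_at_k(r, rel, k) for r, rel in results) / n,
        "mrr": sum(reciprocal_rank(r, rel) for r, rel in results) / n,
    }


# Stand-in for a real database client: canned results per query.
canned = {"q1": ["a", "x", "b"], "q2": ["y", "c", "z"]}
golden = [("q1", {"a", "b"}), ("q2", {"c"})]
report = evaluate(golden, lambda query, k: canned[query][:k], k=3)
```

Running the same `evaluate` call against each candidate, with only the `search` wrapper swapped, keeps the comparison about retrieval quality rather than client-library differences.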

For UAE enterprises, also evaluate:

- Data residency evidence (audit-grade documentation)

- Encryption at rest + in transit

- Integration with UAE-resident KMS

- Backup and disaster recovery patterns

- Vendor compliance attestations (SOC 2, ISO 27001, HIPAA where relevant)

aiml.qa’s engagements include this evaluation as part of RAG readiness assessments.

How aiml.qa Delivers

aiml.qa runs RAG evaluation and vector database selection engagements as fixed-scope sprints:

- 5-day RAG Readiness Assessment - evaluates current or planned RAG architecture; benchmarks vector database candidates against your corpus and queries; produces selection recommendation with UAE compliance analysis

- 2-4 week RAG Evaluation Suite Implementation - deploys RAGAS + DeepEval + custom metrics; establishes continuous retrieval quality monitoring; integrates with production observability

- Ongoing AI Product QA Retainer - monitors RAG quality over time, detects retrieval drift, recommends tuning

For CBUAE-regulated deployments, engagements explicitly map evaluation artefacts to CBUAE AI Guidance model-governance requirements.

Book a free 30-minute discovery call to scope your RAG evaluation engagement with aiml.qa.

Related Reading

- LLM Evaluation Framework Benchmark 2026 - DeepEval, RAGAS, Promptfoo for evaluation of RAG output quality

- Running vLLM on Kubernetes in UAE - LLM inference serving for RAG applications

- CBUAE AI Guidance for UAE Banks - model governance including RAG vendor DD

- AI Agent Framework Comparison - agents consuming RAG via vector databases

Frequently Asked Questions

What is the best vector database in 2026?

No single vector database leads across every dimension. For enterprise managed SaaS with the strongest RAG ecosystem: Pinecone. For open-source self-hosted with hybrid search: Qdrant or Weaviate. For teams already on Postgres: pgvector, with pgvectorscale for larger workloads. For lightweight developer-first RAG prototypes: Chroma or LanceDB. For petabyte-scale multi-modal: Milvus or Vespa. Many production RAG deployments in 2026 converge on Qdrant or Pinecone, with a growing contingent on pgvector for simpler architectures.

Pinecone vs Weaviate vs Qdrant - which should I use?

Each has different strengths. Pinecone is the managed SaaS leader with the easiest operational path and the strongest RAG ecosystem integrations. Weaviate is the longest-standing open-source option of the three, with built-in full-text + vector hybrid search and strong Python/TypeScript SDKs. Qdrant is the performance-focused open-source option, with excellent filtering, a Rust-based engine, and strong hybrid search. For managed simplicity: Pinecone. For open-source self-hosted with UAE residency: Qdrant or Weaviate. Qdrant has slightly stronger adoption momentum in 2026.

Is pgvector production-ready for RAG?

Yes, for most RAG use cases. pgvector has matured significantly through 2024-2026 with pgvectorscale (Timescale) and halfvec / HNSW improvements. Strong fit when you already run Postgres and want to avoid a separate vector database. Performance for moderate datasets (under 10M vectors with filter predicates) is excellent. For very large datasets (100M+ vectors) or complex multi-tenant patterns, dedicated vector databases (Qdrant, Pinecone) typically outperform. For UAE enterprises already running Postgres (RDS, Azure Database for PostgreSQL, Oracle), pgvector dramatically simplifies architecture.

What is hybrid search and which vector DBs support it?

Hybrid search combines vector similarity (semantic) with lexical search (BM25 or full-text) to capture both semantic intent and exact keyword matches. It is critical for most production RAG because pure vector search misses product names, IDs, and specific terminology, while pure lexical search misses paraphrased concepts. Support quality: Weaviate (excellent built-in BM25 + vector), Qdrant (dense + sparse fusion via the Query API since 2024; BM42 itself is experimental), Vespa (native hybrid), Elasticsearch + vector (mature but complex), Pinecone (added hybrid in 2024; growing), pgvector (vector + Postgres full-text via tsvector). For production RAG, hybrid search is non-negotiable.

How much does Pinecone cost?

Pinecone pricing is usage-based in 2026: Starter tier free up to limited scale; Standard tier usage-based per read / write / storage; Enterprise tier with dedicated infrastructure and compliance features. Typical spend for production: USD 100-1,000/month for mid-size RAG (1-10M vectors, moderate query volume), USD 5-50k/month for large enterprise (100M+ vectors, high QPS). Self-hosted alternatives (Qdrant, Weaviate, pgvector) have near-zero licence cost but require operational investment.

Can I use Postgres alone for vector search instead of a dedicated vector DB?

Yes, via pgvector extension. For vector counts under 10M with standard RAG query patterns, pgvector with HNSW index delivers production-grade performance. Combine with Postgres full-text search (tsvector) for hybrid search. Benefits: no new storage system, transactional consistency with your application data, familiar ops. Trade-offs: limits at very large scale, fewer specialized features (multi-vector models, advanced filtering, managed scaling). For most UAE enterprises starting RAG, pgvector is the simplest first choice - migrate to specialized if you hit limits.

Which vector databases satisfy UAE data residency requirements?

For CBUAE Article 13 customer data residency, NESA CII, and strict DESC ISR v3 interpretations: self-hosted options (Qdrant, Weaviate, Milvus, pgvector) deployed in UAE-resident infrastructure (AWS me-central-1, Azure UAE North, Oracle Cloud UAE, Core42, Stargate UAE) provide full residency control. SaaS options (Pinecone, Weaviate Cloud, Qdrant Cloud) need explicit UAE / EU region attestation - most have EU options, UAE regions are less common. For maximum residency certainty, self-hosted + UAE infrastructure is the cleanest path.

Which vector database is best for enterprise RAG with compliance requirements?

For CBUAE-regulated UAE banks, DFSA-regulated DIFC firms, and similarly-regulated enterprises: self-hosted Qdrant or Weaviate on UAE-resident infrastructure is the typical 2026 choice. Add pgvector for auxiliary vector data that naturally lives in Postgres. For non-residency-sensitive workloads, Pinecone's enterprise tier with EU region is viable. Document: deployment architecture, data residency evidence, access controls, encryption at rest, backup policy, and evaluation evidence per CBUAE AI Guidance vendor due diligence requirements.
