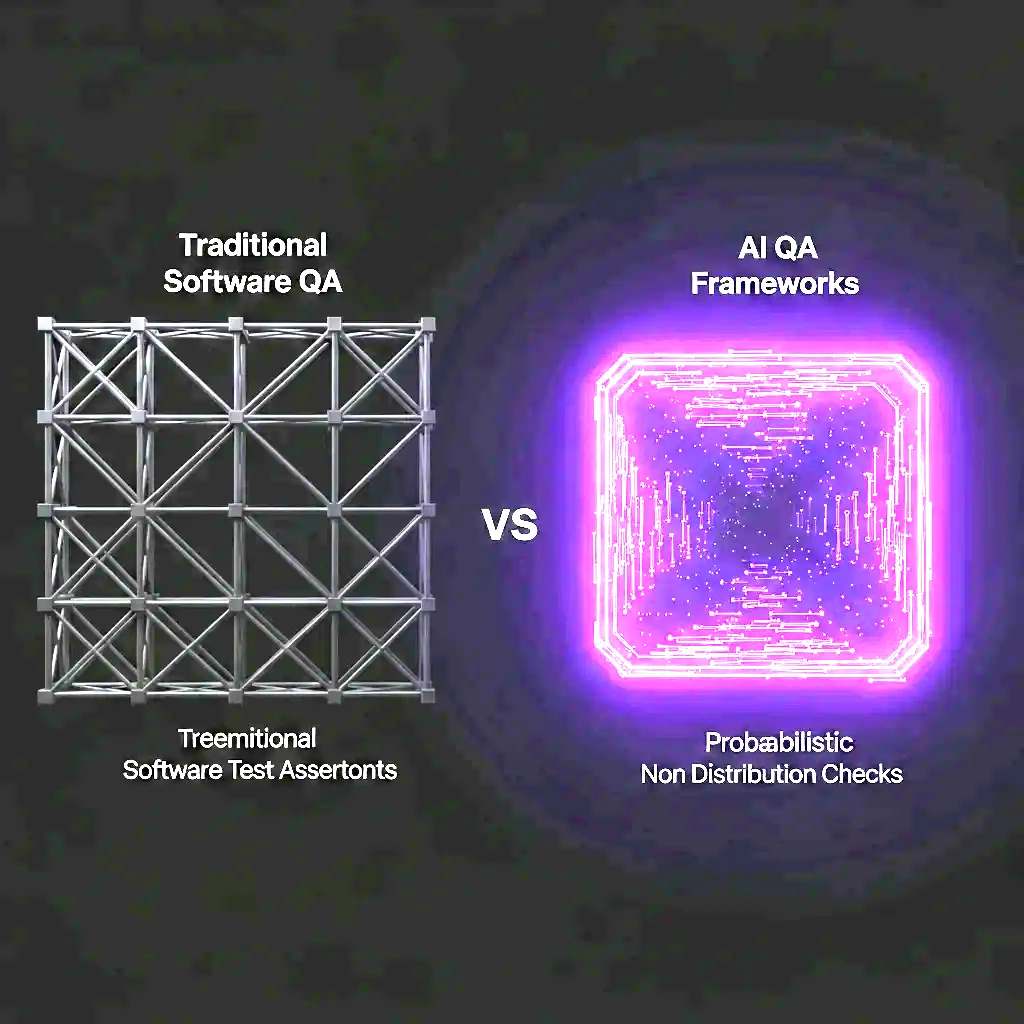

AI QA vs Traditional Software QA: What's Different

The five fundamental differences between AI QA and traditional software QA - why standard testing teams fail at AI, and what the AI QA discipline requires.

AI/ML systems are not software in the sense that traditional QA was designed to test. They share infrastructure with software - they run on servers, they have APIs, they respond to inputs - but their behaviour is fundamentally different in ways that make standard QA methodologies inadequate.

Here are the five differences that matter most.

1. Non-Determinism

Software: Given input A, a function always returns B. Testing is about verifying B.

AI/ML: Given input A, a model returns B most of the time, and C some of the time, and D occasionally. The variance is inherent to the system. A test that passes once is not evidence that it will pass again.

What this means for QA: AI testing requires statistical approaches. Instead of “does this test pass?”, the question is “what is the pass rate of this test over N runs?” Evaluation sets replace unit test cases. Confidence intervals replace binary pass/fail.

2. Probabilistic Correctness

Software: An output is correct or it isn’t. Correctness is usually binary and unambiguous.

AI/ML: Correctness is probabilistic and context-dependent. Is a response that is 90% accurate correct? Is a response that is factually accurate but not useful correct? Is a response that satisfies the requirement 95% of the time acceptable?

What this means for QA: AI QA requires agreed acceptance criteria expressed as performance thresholds - not binary pass/fail. “The hallucination rate must be below 3% on the domain evaluation set” is an AI QA acceptance criterion. “The model output matches the expected output” is not meaningful.

3. Data Dependency

Software: The code is the system. The same code, the same behaviour.

AI/ML: The model is a function of the training data. Different training data produces different model behaviour. The data is as much “the system” as the code.

What this means for QA: AI QA must cover the data layer, not just the model layer. Data quality audits, training/test split validation, distribution shift detection - these are AI QA disciplines with no equivalent in traditional software testing.

4. Drift

Software: Software does not degrade on its own. A function that works in January still works in December with the same inputs.

AI/ML: Models drift. As the real-world distribution of inputs shifts away from the training distribution, model performance degrades - silently, gradually, and without any change to the model code.

What this means for QA: AI QA is not a pre-deployment gate. It is a continuous process. Models require ongoing monitoring, periodic re-evaluation, and systematic retraining cycles. A model that passed QA six months ago may be failing QA today.

5. Emergent Behaviour

Software: A function does what the code says it does. Unexpected behaviour is a bug.

AI/ML: Large language models exhibit emergent behaviours that were not explicitly programmed and were not present in smaller model versions. Safety properties that held in one context may not hold in another. A model that behaves correctly on your test set may behave incorrectly in ways you haven’t tested.

What this means for QA: AI QA must include adversarial evaluation - red-teaming that actively searches for failure modes outside the anticipated test cases. Systematic testing of known vulnerability categories (OWASP LLM Top 10) is a minimum baseline.

What AI QA Requires

AI QA is a distinct discipline from software QA. It requires:

- Evaluation methodology: Statistical testing, evaluation sets, threshold-based acceptance criteria

- Bias and fairness expertise: Demographic parity, equalized odds, fairness metrics appropriate to the domain

- Adversarial testing: Red-teaming, prompt injection, jailbreak surface mapping for LLM systems

- Data quality assessment: Training data audits, distribution analysis, PII detection

- MLOps knowledge: Pipeline testing, drift monitoring, rollback verification

Most software QA teams have none of these capabilities. Bringing them in-house requires hiring or training a specialist. Buying them from a pure-play AI QA firm is typically faster and more cost-effective for Series A–C startups.

Book a free AI QA scope call to discuss the specific AI QA gaps in your current testing process.

Ship AI You Can Trust.

Book a free 30-minute AI QA scope call with our experts. We review your model, data pipeline, or AI product - and show you exactly what to test before you ship.

Talk to an Expert